Summary

- Company: MIM Software

- In Collaboration With: Product, Engineering, Clinical Science, Site Development, and Sales

- Tools: Figma and Google Suite

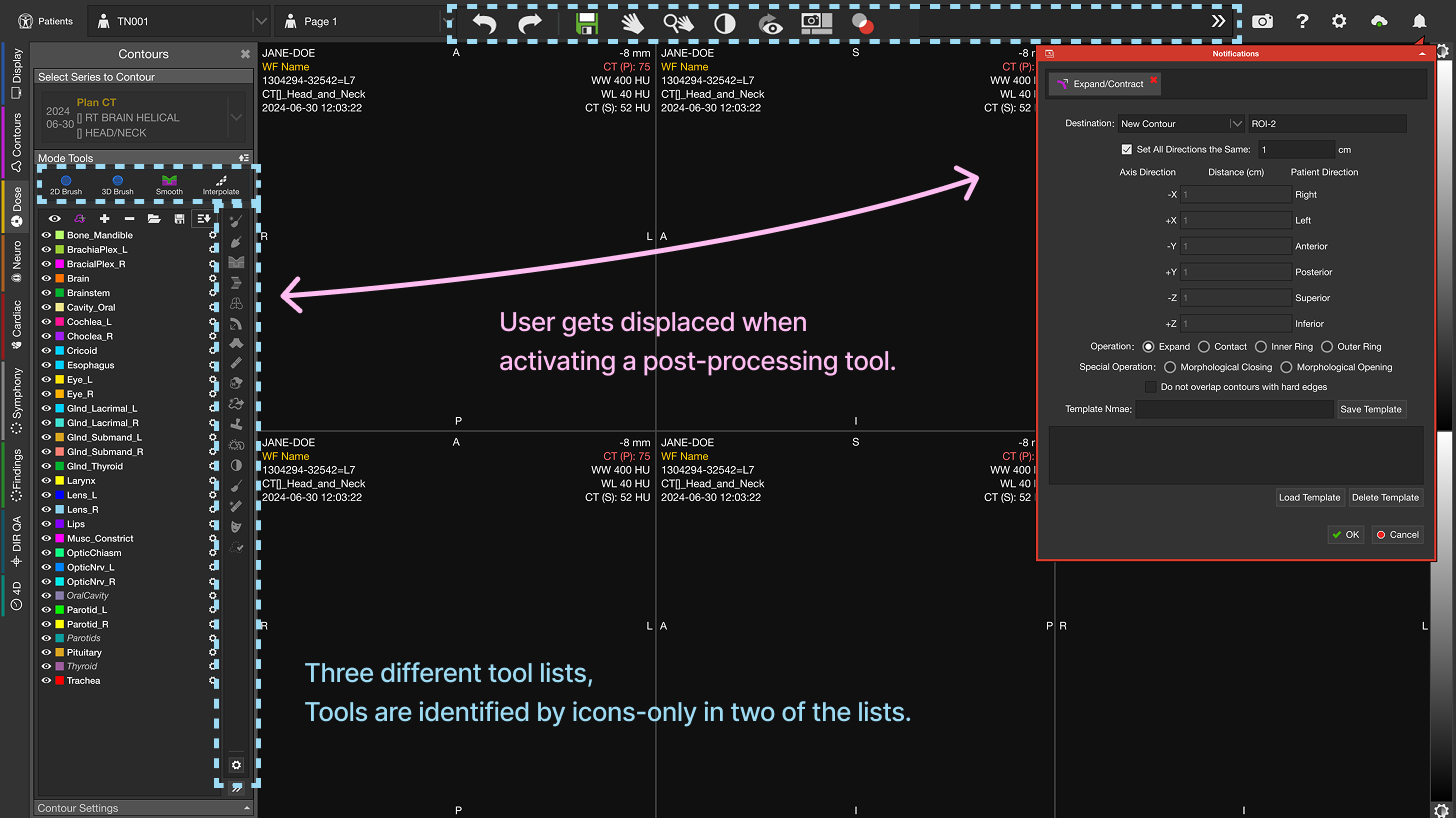

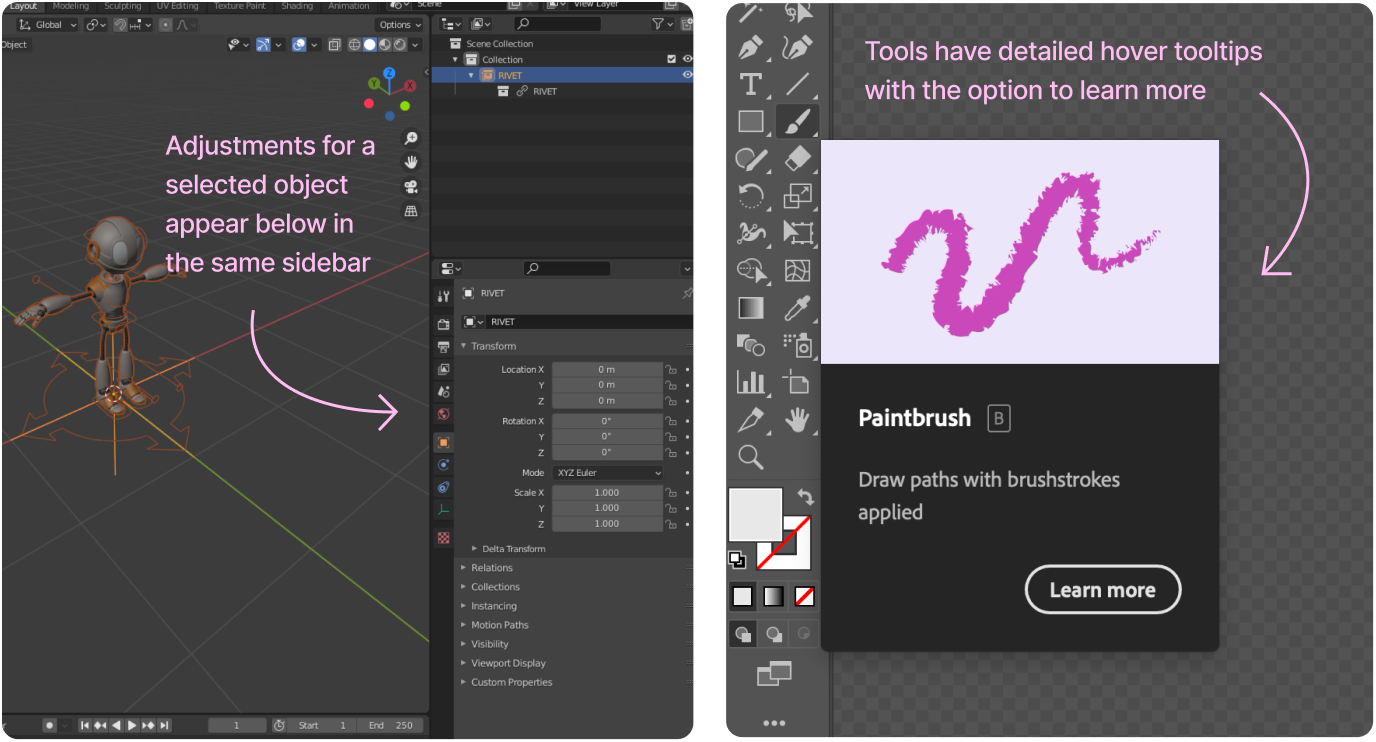

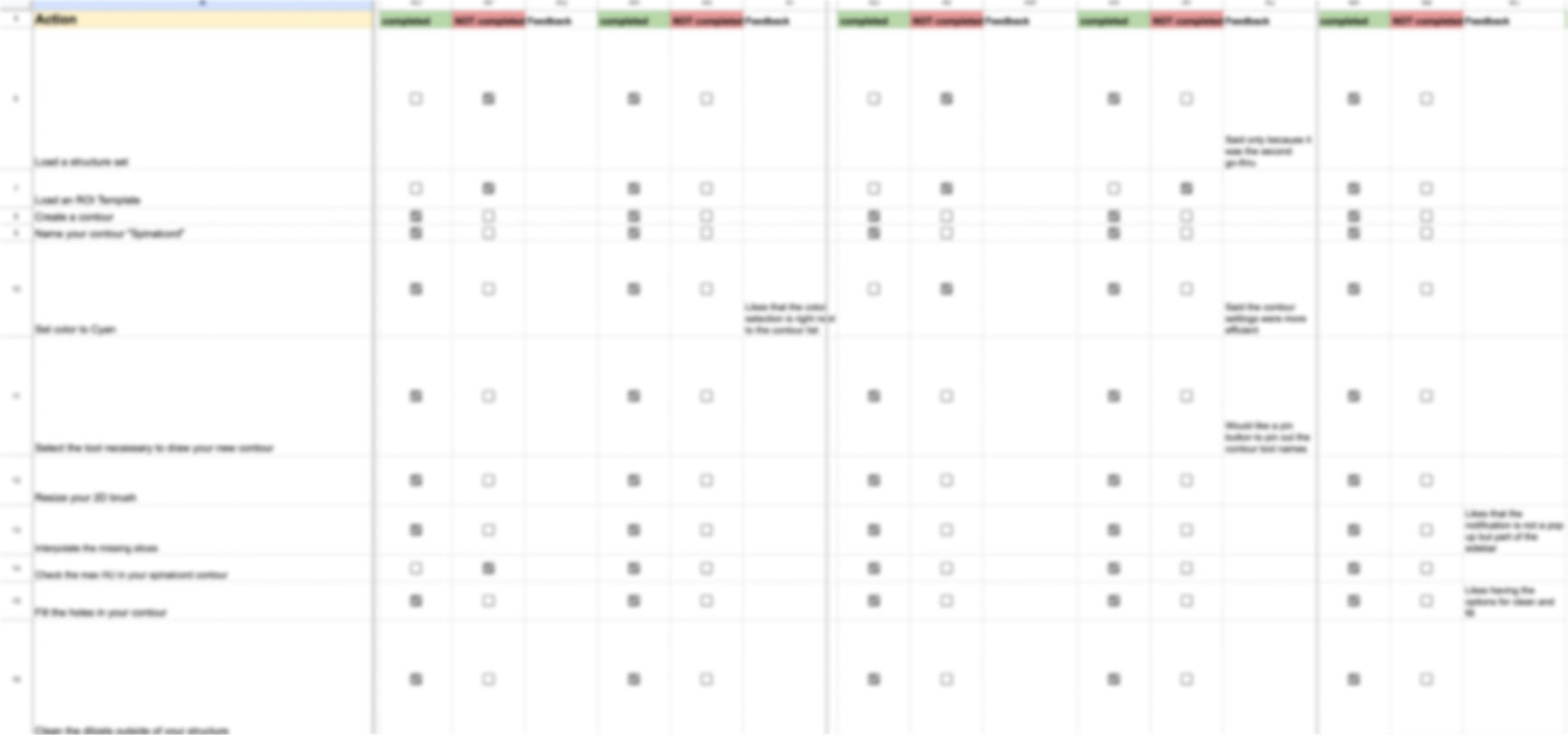

- The Problem: We believed the complexity of our contouring tools was becoming a barrier to MIM adoption among physicians.

- The Goal: Improve contouring accessibility for physicians by making the tools they need for daily work easier to find and learn.